Nowadays, we all rely on artificial intelligence. It recommends our movies, filters our spam, and even helps doctors diagnose diseases. But in most cases, AI functions as a “black box”: we feed it data, it gives us an answer, and we have little to no idea how it arrived at its conclusion.

For low-stakes tasks, that’s fine. If a streaming service gets a recommendation wrong, you just scroll to the next movie.

But what if that black box is deciding who gets a home loan, who gets a job interview, or what’s in a medical scan? What if a generative AI, asked to summarize a legal document, “hallucinates” and invents a legal precedent? Suddenly, not knowing “why” is a massive problem.

This is where Explainable AI (XAI) comes in.

This guide is for beginners and business leaders alike. We’ll break down, in simple terms, what XAI is, how it works, and why, in 2026, adopting it is no longer a strategic option but a non-negotiable business imperative.

What is Explainable AI (XAI)? A Simple Guide

Quick Answer: Explainable AI (XAI) is a set of tools and techniques that make AI models transparent, allowing humans to understand why and how an AI made a specific decision.

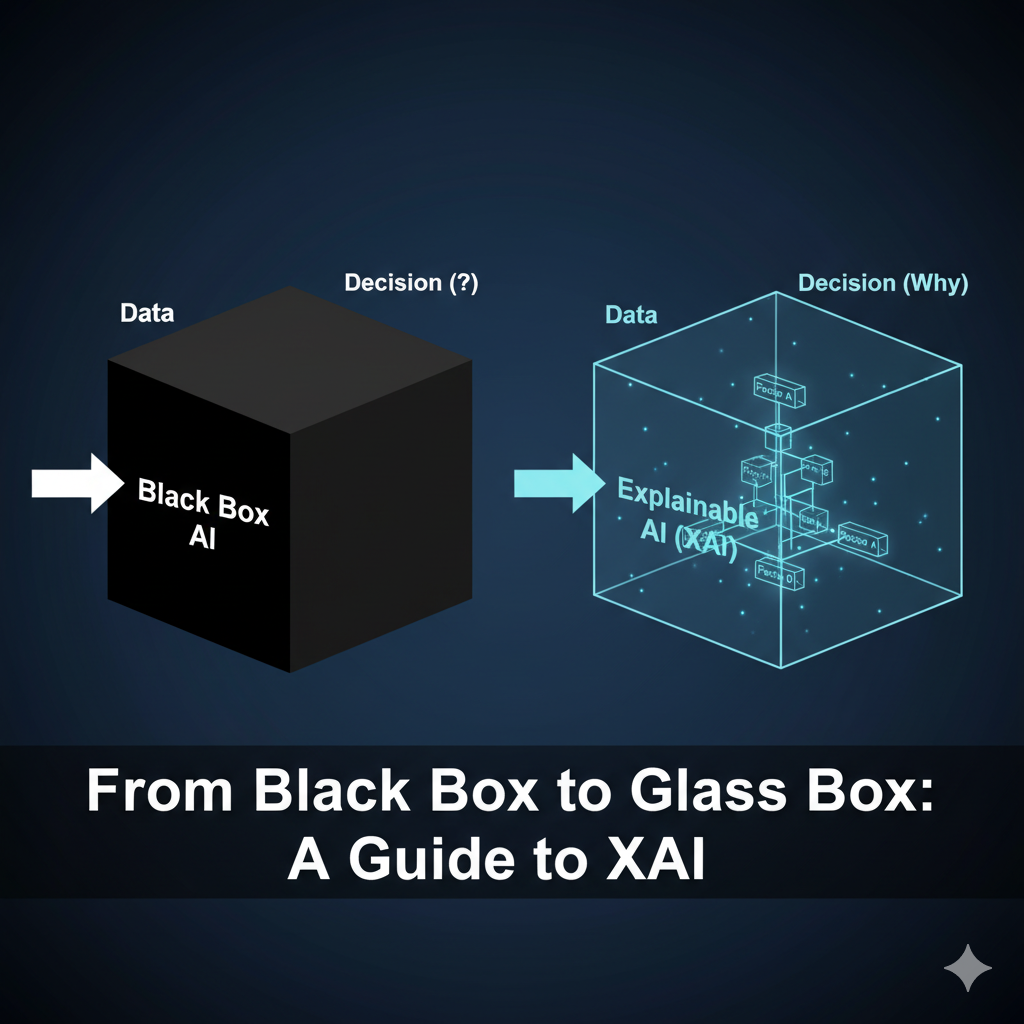

At its core, XAI is about turning “black boxes” into “glass boxes.”

- A Black Box (like most complex AI) gives you an answer but hides its internal logic. You can’t see the “why.”

- A Glass Box (an XAI system) not only gives you an answer but also “shows its work,” allowing you to inspect the process and understand the key factors that led to the outcome.

Early AI models, like simple linear regression, were naturally explainable. A bank could easily say, “You were denied this loan because your credit score of 580 (Factor A) and your 45% debt-to-income ratio (Factor B) were the main drivers.”
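To see why such models are “naturally explainable,” here is a minimal Python sketch of a logistic-regression-style loan scorecard. The weights, reference applicant, and feature names are hypothetical, chosen only for illustration. Because the model is a weighted sum, each feature’s contribution can be read off directly as weight × (value - reference), with no extra explanation tool needed.

```python
import math

# Hypothetical weights in log-odds of approval, for illustration only (not from any real lender).
WEIGHTS = {"credit_score": 0.012, "debt_to_income": -6.0, "years_employed": 0.08}
# Hypothetical "typical applicant" that each contribution is measured against.
REFERENCE = {"credit_score": 700.0, "debt_to_income": 0.30, "years_employed": 5.0}
INTERCEPT = 1.0  # log-odds of approval for an applicant exactly at REFERENCE


def explain_linear(applicant):
    """Return (approval probability, per-feature contributions in log-odds)."""
    contributions = {
        name: WEIGHTS[name] * (applicant[name] - REFERENCE[name]) for name in WEIGHTS
    }
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return probability, contributions


applicant = {"credit_score": 580.0, "debt_to_income": 0.45, "years_employed": 6.0}
probability, contributions = explain_linear(applicant)
print(f"approval probability: {probability:.2f}")
# Most negative contributions first: these are the main drivers of a denial.
for name, value in sorted(contributions.items(), key=lambda kv: kv[1]):
    print(f"{name:>15}: {value:+.2f} log-odds")
```

For this made-up applicant the sketch reports an approval probability of about 0.22, with the credit score (-1.44 log-odds) and the debt-to-income ratio (-0.90) as the two largest negative drivers, exactly the kind of statement a bank could give a customer.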

But modern “deep learning” and “generative AI” models are vastly more complex, with billions of parameters. Their internal workings are so intricate that even the data scientists who build them can’t pinpoint the exact reason for a single decision. XAI provides the tools to translate that complexity back into human-understandable terms.

Why is Explainable AI (XAI) Non-Negotiable for Business in 2026?

Quick Answer: Explainable AI (XAI) is non-negotiable by 2026 due to three forces: massive reputational risk from biased AI, severe financial penalties from new laws like the EU AI Act, and the clear business ROI of building customer and employee trust.

For years, Explainable AI (XAI) was a “nice-to-have.” By 2026, it’s a “must-have” for the entire business.

1. The Staggering Cost of AI Bias: Reputational Risk

When you don’t know why your AI is making decisions, you can’t control for the biases it may have learned. This has already led to PR nightmares and real-world harm.

- Discriminatory Lending: In 2024, investigations into mortgage lending algorithms revealed they were denying loan applications from minority applicants at significantly higher rates than white applicants, even when key factors like income and debt were similar. The models had learned to penalize applicants based on proxies for race, like the neighborhood they lived in.

- Biased Healthcare: A widely used US healthcare algorithm was found to be systematically biased against Black patients. The model used “healthcare cost history” as a proxy for “health need.” Because less money had historically been spent on Black patients’ care (due to various systemic factors), the AI concluded they were healthier than equally sick white patients, cutting the number of Black patients identified for extra care by more than half.

- Unfair Justice: The COMPAS algorithm, used in the U.S. justice system, was found in ProPublica’s 2016 analysis to be nearly twice as likely to incorrectly flag Black defendants as high-risk for re-offending compared to white defendants.

These failures aren’t just technical; they are brand-destroying. They erode customer trust, damage your company’s reputation, and can lead to significant revenue loss.

The New Frontier: Generative AI Risk

The “black box” problem is even more dangerous with Generative AI (like LLMs). In 2025, researchers found AI models used in corporate recruiting were scoring candidates with natural Black hairstyles as “less professional” than those with straight hair. This isn’t just a technical glitch; it’s the AI laundering and scaling real-world stereotypes, creating a massive new surface area for reputational and legal risk.

2. The Regulatory Hammer: Financial Risk & The EU AI Act

Governments are no longer treating AI as a “wild west” technology. Regulation is here, and it has teeth.

The most significant development is the EU AI Act, the world’s first comprehensive AI regulation. This law, with enforcement ramping up through 2026, mandates transparency and explainability for any “high-risk” AI system—a category that includes AI used in:

- Hiring and worker management

- Credit scoring and financial services

- Education and vocational training

- Law enforcement and justice

- Medical devices and critical infrastructure

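The penalties for non-compliance are severe:

Fines for the most serious violations (using prohibited AI practices) can reach €35 million or 7% of a company’s total worldwide annual turnover, whichever is higher. Failing to meet the obligations for high-risk systems can cost up to €15 million or 3%.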
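“Whichever is higher” means the percentage cap only takes over for large companies: 7% of turnover equals €35 million at exactly €500 million in turnover. The minimal sketch below shows that arithmetic for the €35 million / 7% ceiling only; the function name is hypothetical, and it ignores the Act’s other penalty tiers and any special rules for smaller firms.

```python
def max_fine_eur(worldwide_turnover_eur: int,
                 fixed_cap_eur: int = 35_000_000,
                 turnover_percent: int = 7) -> int:
    """Upper bound on the fine: the fixed cap or a share of turnover, whichever is higher."""
    # Integer arithmetic keeps the result exact (no floating-point rounding on large amounts).
    turnover_cap = worldwide_turnover_eur * turnover_percent // 100
    return max(fixed_cap_eur, turnover_cap)


for turnover in (100_000_000, 500_000_000, 2_000_000_000):
    print(f"turnover €{turnover:,}: max fine €{max_fine_eur(turnover):,}")
```

For a company with €2 billion in turnover, the ceiling is €140 million, four times the fixed amount.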

For businesses operating in or serving the EU, “we don’t know how it works” is no longer a valid legal or financial defense. It’s a confession of liability.

3. The ROI of Trust: The Business Opportunity

This isn’t just about avoiding disaster. Implementing XAI is a proactive strategy that drives real business value.

- Builds Trust and Adoption: The number one barrier to AI adoption is a lack of trust. A doctor is unlikely to use an AI that just says “this patient is high-risk for sepsis.” But they will use an AI that says, “This patient is high-risk because their lactate levels are rising, their blood pressure is dropping, and their age is a key factor.”

- Makes Models Better (Debugging): Explainable AI (XAI) is the ultimate debugging tool. By seeing why a model is making strange predictions, data scientists can identify issues like data drift, detect hidden biases, and fix errors before they are deployed to customers.

- Boosts ROI: By enhancing trust, accelerating adoption, and reducing costly errors, Explainable AI (XAI) directly improves the return on investment (ROI) for your AI initiatives. It allows you to confidently automate high-volume decisions (like approvals or triage) because you have an audit trail for every single decision.
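An audit trail can be as simple as writing one structured record per automated decision, stored together with its explanation. A minimal sketch, assuming a JSON-lines log; the field names, model identifier, and contribution values are hypothetical placeholders, not a standard schema or real model output.

```python
import json
from datetime import datetime, timezone


def audit_record(model_id, model_version, inputs, decision, top_factors):
    """Build one JSON line describing a single automated decision and why it was made."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        "model_version": model_version,
        "inputs": inputs,
        "decision": decision,
        # (feature, contribution) pairs produced by whichever explainer the team uses
        "top_factors": [{"feature": f, "contribution": c} for f, c in top_factors],
    }
    return json.dumps(record, sort_keys=True)


line = audit_record(
    model_id="loan-approval",  # hypothetical identifiers and values
    model_version="2026.01",
    inputs={"credit_score": 610, "debt_to_income": 0.48, "recent_inquiries": 5},
    decision="deny",
    top_factors=[("debt_to_income", -0.31), ("credit_score", -0.22), ("recent_inquiries", -0.12)],
)
print(line)
```

Appending each line to an append-only store gives compliance teams a per-decision record they can query later.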

How Does XAI “Show Its Work”? (3 Simple Methods)

You don’t need to understand complex math to understand the concept behind XAI. Here are three of the most popular methods, explained for beginners.

1. LIME (Local Interpretable Model-agnostic Explanations)

- What it is: A technique that explains one prediction at a time.

- Simple Analogy: Imagine your complex AI is a massive, winding curve. You can’t explain the whole curve at once. LIME works by taking a tiny, flat “piece of glass” and pressing it against one single point on that curve to get a simple, “local” approximation.

- In Practice (Loan Denial): You ask LIME, “Why was this customer’s loan application denied?” LIME will tell you: “For this specific customer, the three biggest factors were (1) High Debt-to-Income Ratio (48%), (2) Low Credit Score (610), and (3) Number of Recent Credit Inquiries (5).”
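The “piece of glass” is a weighted linear regression fitted to the black box’s outputs on small perturbations of one applicant. The sketch below implements that idea from scratch in plain Python rather than calling the `lime` package; the model, feature scales, kernel width, and sample count are all hypothetical choices for illustration.

```python
import math
import random

FEATURES = ["debt_to_income", "credit_score", "recent_inquiries"]
# Assumed typical spread of each feature; perturbations are drawn on this scale.
SCALES = [0.10, 50.0, 2.0]


def black_box(x):
    """Hypothetical opaque model returning P(approve); stands in for any complex model."""
    dti, score, inquiries = x
    z = (3.7 - 15.0 * max(dti - 0.35, 0.0) + 0.015 * (score - 650)
         - 0.00005 * (score - 650) ** 2 - 0.3 * inquiries)
    return 1.0 / (1.0 + math.exp(-z))


def solve(a, b):
    """Solve the small linear system a @ x = b (Gaussian elimination, partial pivoting)."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def lime_explain(model, instance, scales, n_samples=2000, kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear surrogate around one instance (LIME-style)."""
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        offsets = [rng.gauss(0.0, 1.0) for _ in instance]  # standardized perturbation
        sample = [v + d * s for v, d, s in zip(instance, offsets, scales)]
        rows.append([1.0] + offsets)  # intercept column + standardized features
        targets.append(model(sample))
        # Exponential kernel: perturbations close to the instance count more.
        weights.append(math.exp(-sum(d * d for d in offsets) / kernel_width ** 2))
    k = len(instance) + 1
    # Weighted least squares via the normal equations (X^T W X) beta = X^T W y.
    xtwx = [[sum(w * r[i] * r[j] for r, w in zip(rows, weights)) for j in range(k)]
            for i in range(k)]
    xtwy = [sum(w * r[i] * y for r, w, y in zip(rows, weights, targets)) for i in range(k)]
    beta = solve(xtwx, xtwy)
    return beta[1:]  # change in P(approve) per one "typical spread" of each feature


applicant = [0.48, 610.0, 5.0]
effects = lime_explain(black_box, applicant, SCALES)
ranked = sorted(zip(FEATURES, effects), key=lambda fe: abs(fe[1]), reverse=True)
print(f"P(approve) = {black_box(applicant):.2f}")
for name, effect in ranked:
    print(f"{name:>16}: {effect:+.3f}")
```

With this made-up model the applicant’s approval probability comes out around 0.39 (a denial at a 0.5 cutoff), and the surrogate ranks debt-to-income first, credit score second, and recent inquiries third. Debt-to-income and inquiries get negative effects; credit score gets a positive one, meaning a higher score would help. These coefficients describe the black box only near this one applicant. The `lime` package builds on the same idea with more careful sampling and feature selection.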

2. SHAP (SHapley Additive exPlanations)

- What it is: A more comprehensive approach based on game theory that shows how much each feature contributed to the prediction.

- Simple Analogy: Imagine your AI’s prediction is the final “score” of a team game. SHAP’s job is to fairly determine how much each “player” (each data feature, like ‘age’, ‘income’, ‘zip code’) contributed to that final score.

- In Practice (Global Explanation): SHAP can combine the contributions from thousands of individual “games” (predictions), for example by averaging the size of each feature’s contribution, to show which features matter most to the model overall. A SHAP plot might show a business leader that ‘Annual Income’ is the biggest driver of approvals, while ‘Debt-to-Income Ratio’ is the biggest driver of denials.
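The “fair split” SHAP computes is the Shapley value from cooperative game theory. For a model with only a few features, it can be computed exactly by trying every coalition of “players.” The sketch below does that in plain Python. It is not the `shap` library, which uses faster algorithms and a background dataset rather than a single baseline; the model, features, and applicants here are hypothetical.

```python
from itertools import combinations
from math import factorial

FEATURES = ["annual_income_k", "debt_to_income", "credit_score"]


def approval_score(x):
    """Hypothetical model output (higher = more likely to approve), with an interaction term."""
    income_k, dti, score = x
    return 0.02 * income_k - 4.0 * dti + 0.005 * (score - 650) + 0.03 * income_k * (0.35 - dti)


def coalition_value(model, x, baseline, coalition):
    """Model output when features in `coalition` use x's values and the rest use the baseline."""
    mixed = [x[i] if i in coalition else baseline[i] for i in range(len(x))]
    return model(mixed)


def shapley_values(model, x, baseline):
    """Exact Shapley values by enumerating every coalition (fine for a handful of features)."""
    n = len(x)
    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi += weight * (coalition_value(model, x, baseline, s | {i})
                                 - coalition_value(model, x, baseline, s))
        phis.append(phi)
    return phis


# Hypothetical applicants; the baseline is their feature-wise average.
applicants = [[85.0, 0.25, 720.0], [40.0, 0.48, 610.0], [60.0, 0.35, 680.0], [120.0, 0.30, 750.0]]
baseline = [sum(col) / len(applicants) for col in zip(*applicants)]

# Local: one applicant's contributions.
local = shapley_values(approval_score, applicants[1], baseline)
# Global: average size of each feature's contribution across all applicants.
all_phis = [shapley_values(approval_score, a, baseline) for a in applicants]
global_importance = [sum(abs(p[i]) for p in all_phis) / len(all_phis) for i in range(len(FEATURES))]

for name, phi, imp in zip(FEATURES, local, global_importance):
    print(f"{name:>16}: local {phi:+.3f}   global mean|SHAP| {imp:.3f}")
```

The defining “additive” property holds here: one applicant’s contributions always sum to the gap between that applicant’s score and the baseline score. Averaging the absolute contributions over many applicants produces the global ranking that a SHAP summary plot displays.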

3. Counterfactual Explanations

- What it is: This intuitive method explains a decision by showing what would need to change to get a different outcome.

- Simple Analogy: It’s the “What If?” scenario.

- In Practice (Job Application):

  - Decision: “This candidate was automatically rejected.”

  - Counterfactual Explanation: “This candidate would have been advanced to the interview stage IF they had ‘PMP Certification’ OR ‘3+ years of management experience’.”
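A brute-force search makes the “What If?” idea concrete: try small, actionable edits to the candidate’s profile and keep the smallest ones that flip the decision. The screening rule, point values, and allowed edits below are hypothetical; practical counterfactual tools also weigh how plausible and how costly each change is.

```python
from itertools import combinations, product


def advances(candidate):
    """Hypothetical screening model: True if the candidate reaches the interview stage."""
    points = 2 if candidate["pmp_certified"] else 0
    points += min(candidate["management_years"], 5)  # experience points are capped at 5
    points += 1 if candidate["has_degree"] else 0
    return points >= 4


def counterfactuals(model, candidate, options, max_changes=2):
    """Return the smallest sets of feature edits that turn a rejection into an advance.

    `options` maps each editable feature to candidate values, ordered from the
    smallest change to the largest, so for a single feature the first flip found
    is the smallest one.
    """
    for k in range(1, max_changes + 1):
        found = []
        for features in combinations(options, k):
            for values in product(*(options[f] for f in features)):
                edits = dict(zip(features, values))
                if model({**candidate, **edits}):
                    found.append(edits)
                    break
        if found:  # stop at the fewest number of changes that works
            return found
    return []


candidate = {"pmp_certified": False, "management_years": 1, "has_degree": True}
options = {"pmp_certified": [True], "management_years": [2, 3, 4, 5]}
print("advances:", advances(candidate))
for edits in counterfactuals(advances, candidate, options):
    print("would advance IF", edits)
```

Here the search returns two separate single-change explanations, obtaining PMP certification or reaching 3 years of management experience, which mirrors the “OR” in the job-application example.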

Your 4-Step Explainable AI (XAI) Action Plan for 2026

XAI is not just a technical project; it’s a core business strategy. Here is a 4-step plan to get started.

- AUDIT Your AI Landscape: Map out every AI model currently in use or in development in your organization.

- PRIORITIZE by Risk: Classify each model as low, medium, or high-risk. A movie recommender is low-risk. A hiring or lending tool is high-risk.

- INTEGRATE Explainability: Require every high-risk and medium-risk system to have Explainable AI (XAI) tools and decision logging built in from day one. This is not an add-on; it’s a core design requirement.

- TRAIN Your People: Explainable AI (XAI) is not just for data scientists. Your legal, compliance, and front-line teams need to be trained on how to interpret and act on AI explanations.
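The AUDIT, PRIORITIZE, and INTEGRATE steps can start as a simple model inventory plus a rule that maps each use case to a risk tier. A minimal sketch; the tier assignments and use-case labels are hypothetical placeholders that a legal or compliance team would need to define, not a classification taken from the EU AI Act.

```python
from dataclasses import dataclass

# Hypothetical mapping from use case to risk tier, loosely following the categories above.
HIGH_RISK_USES = {"hiring", "lending", "education", "law_enforcement", "medical"}
MEDIUM_RISK_USES = {"customer_support", "fraud_alerts"}


@dataclass
class ModelRecord:
    name: str
    use_case: str


def risk_tier(model: ModelRecord) -> str:
    """PRIORITIZE: classify a model as high, medium, or low risk by its use case."""
    if model.use_case in HIGH_RISK_USES:
        return "high"
    if model.use_case in MEDIUM_RISK_USES:
        return "medium"
    return "low"


def requires_xai(model: ModelRecord) -> bool:
    """INTEGRATE: high- and medium-risk systems need explainability and decision logging."""
    return risk_tier(model) in {"high", "medium"}


# AUDIT: the inventory of models in use or in development (hypothetical entries).
inventory = [
    ModelRecord("resume-screener", "hiring"),
    ModelRecord("movie-recommender", "recommendations"),
    ModelRecord("support-triage", "customer_support"),
]
for m in inventory:
    print(f"{m.name:>18}: {risk_tier(m):<6} XAI required: {requires_xai(m)}")
```

Keeping the tier rules in one place makes them easy to review and update as regulations and internal policies change.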

The Future is a Glass Box: Your Next Steps

Explainable AI (XAI) is the crucial next step in the evolution of artificial intelligence. It’s the bridge that takes AI from being a powerful but inscrutable “black box” to a transparent and trustworthy “glass box” partner.

As of 2026, the business case is closed. Driven by customer demands for transparency, regulatory hammers like the EU AI Act, and the clear ROI of building trust, XAI is no longer a technical-level “nice to have.”

It is a non-negotiable, C-suite-level strategic imperative.

Frequently Asked Questions (FAQ) about XAI

Q1: What is the main goal of Explainable AI (XAI)?

A: The main goal of XAI is to build trust and transparency in AI systems. It allows humans to understand, interpret, and challenge the decisions made by complex “black box” models.

Q2: What is the difference between LIME and SHAP?

A: The simplest difference is scope. LIME is generally used for local explanations (why one single prediction was made). SHAP is more comprehensive and can provide both local explanations and global explanations (which features are most important to the model overall).

Q3: Why is ‘black box’ AI a problem?

A: “Black box” AI is a problem because it’s difficult to know why it made a given decision. This can hide dangerous and costly issues like discriminatory bias, factual errors (hallucinations), and security vulnerabilities, making it a massive legal and reputational risk.

Q4: Is XAI required by law?

A: Yes, in many high-risk cases. The EU AI Act, for example, mandates transparency and traceability for AI systems used in hiring, finance, and other critical sectors. This makes XAI a legal necessity for companies that deploy such systems in, or serve customers in, the EU.