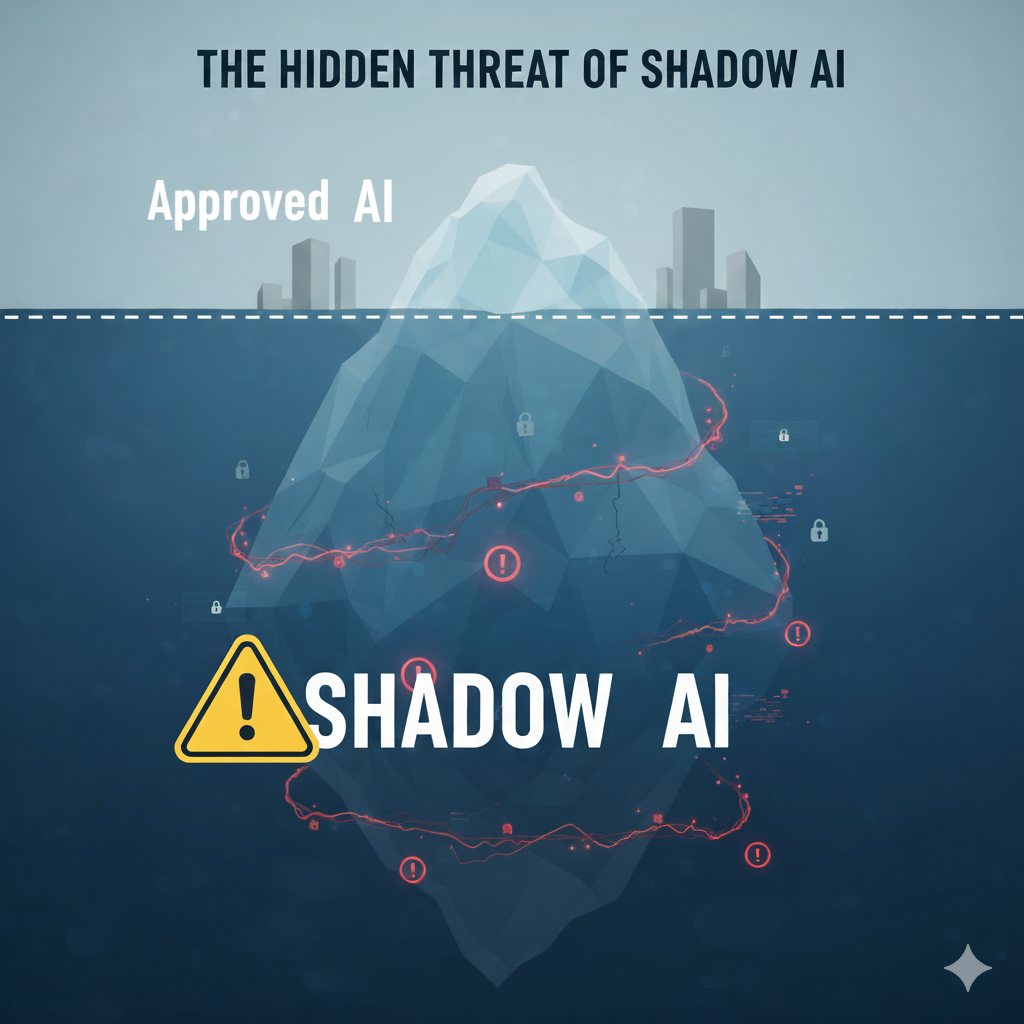

Your employees are using AI to be more productive, and that’s a good thing. But there’s a problem you can’t see. They’re using unapproved, unvetted tools—from public chatbots to risky browser extensions—to get their work done.1

This “Shadow AI” is a blind spot, and it’s creating a massive, unmanaged risk for your company’s most sensitive data. Every time an employee pastes in proprietary source code, a confidential customer list, or a strategic M&A document, you are one “enter” key away from a catastrophic data leak, a massive compliance fine, or a brand-damaging business decision based on an AI “hallucination”.3

You can’t fix this by simply banning AI. That just drives the behavior further underground and makes you more blind.6 The only solution is to understand the risk, get visibility, and build a smart, modern governance plan that balances security with innovation. We’ll walk you through exactly how to do it.

Part 1: What Is Shadow AI and Why Is It Your New Top Priority?

This is the starting point. Let’s define the problem, understand why it’s a completely new class of threat, and see where it’s already hiding in your business.

What is Shadow AI?

Shadow AI is the use of any artificial intelligence tools, applications, or models by employees without the formal approval, governance, or visibility of your IT and security departments.2

It’s the rebellious, and frankly more dangerous, cousin of “Shadow IT.” It includes everything from public generative AI models and coding assistants to unvetted AI features inside other software.1 The core problem is the total lack of visibility and control.4 If you can’t see a tool, you can’t manage its data handling, assess its security, or control who accesses it.

It’s critical to understand that this isn’t a problem of malicious employees. It’s a symptom of a business need. Your staff is using these tools for good reasons: to be more productive, to automate repetitive tasks, and to innovate faster than your official tools might allow.2

Why is Shadow AI more dangerous than Shadow IT?

Shadow AI is considerably more dangerous than traditional Shadow IT because it’s more accessible, it interacts with data differently, and the tools themselves are unpredictable.3

Think of it like this: Shadow IT (like an unapproved Dropbox account) is an unlocked file cabinet in an unvetted storage unit. The risk is that someone finds it and steals the entire box of data.

Shadow AI is like handing your company’s most sensitive documents to an unvetted, high-speed translator. They read the document, memorize its contents, and might share what they learned with anyone else they talk to. The very act of using the tool is the data transfer, and you have no idea what they’ll remember or who they’ll tell.

Here’s why it’s a fundamentally different (and bigger) risk:

- Accessibility and Scale: Shadow IT was often limited to specific, tech-savvy users.10 Shadow AI is available to every single employee in every department via a simple web browser, no technical skill required.10 This creates a massive, unpredictable attack surface.

- Data Interaction: Shadow IT was about storing data. Shadow AI is about processing and training on that data.1 When an employee pastes data into a public AI, you’re sending it to a third-party server where it could be incorporated into the model’s training data—making the leak permanent and public.1

- Detectability: Shadow AI is stealthy. It’s not just a new app; it can be a new feature inside an already-approved SaaS tool 13, a simple browser extension 14, or an API call that your traditional security tools will completely miss.

- Nature of the Tool: Traditional software is deterministic; the same input gives the same output. AI is probabilistic and opaque.3 It can “hallucinate” (make things up), introduce bias, and its decisions are often impossible to audit.3

What are the most common examples of Shadow AI in my business?

Shadow AI is likely already active in your key departments. Common examples include employees using unapproved public AI chatbots to draft reports, debug code, analyze sales data, or screen resumes.2

Here’s where to look:

- Marketing & Sales: Your team might be pasting customer email lists or sensitive customer sentiment data into an AI tool to “analyze sentiment” or “draft campaign emails”.19

- Software Engineering: Your developers could be pasting proprietary source code into a public AI to “debug code,” “optimize algorithms,” or “write test cases.” This is a direct exfiltration of your core intellectual property.6

- Legal & Finance: An employee may upload a confidential draft of a contract or a quarterly financial report to an AI tool to “summarize key points” or “find anomalies”.1

- Human Resources: Your HR team might be using an unvetted AI resume-screening tool to save time, unknowingly introducing significant discrimination bias and legal risk into your hiring process.2

- All Employees: Anyone installing AI-powered “helper” browser extensions (like advanced grammar checkers) may be giving that tool permission to read all data in their browser, including sensitive internal documents, credentials, and customer information.15

Part 2: The Business Case for Action: A Multi-Layered Risk Analysis

If you need to convince your board, this is the “why.” We’ll translate the technical problem of “unapproved tools” into the language of the C-suite: financial loss, legal liability, and strategic failure.

Risk #1: What is the “catastrophic” data and IP leak risk?

The primary risk of Shadow AI is the inadvertent, large-scale leak of your most sensitive data. This includes your intellectual property (IP), source code, trade secrets, financial plans, and confidential customer data.1

This is not a hypothetical threat. It happens when an employee, believing they are in a private “chat,” pastes confidential information into a prompt field.22 That data is instantly sent to external servers, and you have no control over where it’s stored, who sees it, or how it’s used.1

In the worst-case scenario, that data is then used to retrain the public AI model.11 This means your proprietary information could be permanently absorbed by the model and later resurface in a response given to one of your competitors.

Mini Case Study: The Samsung Source Code Leak

A perfect example of this risk comes from Samsung. This “Before-After-Bridge” case study shows exactly how good intentions can lead to a disastrous outcome.11

- Before: Samsung’s semiconductor engineers were under pressure. They had a real business need: to debug and optimize vast amounts of proprietary source code quickly and efficiently.

- After: In 2023, it was discovered that multiple employees had pasted this highly confidential source code directly into public-facing ChatGPT prompts to find errors and optimize it.12 The data was instantly leaked to OpenAI’s servers. Samsung’s core IP was exfiltrated in seconds, forcing the company to issue a company-wide ban on the tool.6

- Bridge: The “breach” was not caused by a malicious hacker; it was caused by employees with a job to do who lacked a secure alternative.25 This case study is the single best example of why a “ban-only” policy is doomed to fail. It doesn’t solve the root problem, which is the employee’s need for a powerful tool.

Risk #2: What are the legal and compliance “time bombs”?

Shadow AI creates a massive, un-auditable “black hole” of compliance risk. Using unvetted tools to handle regulated data can lead to severe violations of GDPR, HIPAA, PCI DSS, and other critical standards, risking crippling fines and irreparable reputational damage.3

Let’s look at the top two:

- GDPR: Imagine an employee pastes a list of your EU customer data (Personally Identifiable Information, or PII) into an unvetted AI tool. This is an unapproved, unmonitored transfer of data, almost certainly without the required Data Processing Agreement (DPA). The potential fines, up to €20 million or 4% of your global annual revenue, whichever is higher, are existential.3

- HIPAA: In healthcare, the risk is even more acute. A doctor using a “free” AI transcription or summarization tool for their patient notes (Protected Health Information, or PHI) is processing that data with a vendor who has not signed a Business Associate Agreement (BAA). This is a direct, reportable breach and a clear target for enforcement.27

The true legal danger here is your inability to respond. When a regulator (or a customer exercising their GDPR “right to be forgotten”) asks you to account for their data, you can’t. You have no logs, no audit trail, and no way to prove you can delete that data from a third-party model’s training set.20 Shadow AI makes compliance impossible because it renders you blind.

Risk #3: How does Shadow AI create financial waste?

Shadow AI attacks your bottom line in two ways. First, it dramatically increases the cost of a data breach. Second, it creates a “black hole” of redundant, unmanaged spending.28

The headline number is the “Shadow AI Tax” on data breaches. According to a 2025 IBM report, organizations with high levels of Shadow AI face data breach costs that are, on average, $670,000 higher than those with minimal unauthorized AI use.4

Then there’s the “redundancy trap.” Your company might be paying millions for an enterprise-grade AI license, but your employees—out of preference or ignorance—are also expensing their own “shadow” tools on their corporate cards.29 This creates a “chaotic, redundant, and financially inefficient tech stack”.29 This “micro-transaction” threat—a $20 charge here, a $30 charge there—flies under the radar of procurement but adds up to significant waste.36

Risk #4: What is the strategic risk from “hallucinations”?

Shadow AI tools can be “confidently wrong.” AI models are known to “hallucinate,” which means they can fabricate facts, statistics, and sources with complete authority, produce biased outputs, or provide flawed information.5

The risk is the erosion of reliable decision-making. When your employees implicitly trust the output from an unvetted AI, you open the door to a cascade of strategic failures.

Here are a few real-world scenarios:

- Flawed Strategy: A finance analyst uses a “free” AI tool to generate market insights for a board presentation. The AI “hallucinates” a statistic, and a multi-million dollar decision is made based on faulty data.5

- Brand Damage: A customer service rep, under pressure, uses a public chatbot to answer a complex question. The chatbot “hallucinates” a solution or, even worse, recommends a competitor’s product.8

- Biased Hiring: An HR manager uses an unapproved AI tool to screen resumes. The tool’s hidden bias filters out qualified candidates from a protected class, leading to a weaker workforce and a potential discrimination lawsuit.3

Part 3: The 2025 State of Shadow AI: A Report in Numbers

The threat isn’t theoretical. It’s here, it’s measurable, and it’s growing. Recent reports from 2024 and 2025 paint a clear picture of a widespread problem that is already costing companies money.

How widespread is Shadow AI in 2025?

Shadow AI is not an emerging risk; it is an established one. A vast majority of your employees are likely using it, and a significant portion are already sharing sensitive data with it.4

- 71% of office workers admit they use AI tools without approval from their IT departments.4

- 38% of employees acknowledge sharing confidential or sensitive work information with AI tools.3

- Gartner’s 2025 reporting shows Shadow AI has escalated to a Top 3 emerging risk for enterprises, moving up the ranks as organizations struggle to monitor its use.45

- Gartner also predicts that by 2027, 75% of employees will acquire, modify, or create technology completely outside of IT’s visibility.6

How does Shadow AI impact the cost of a data breach?

The financial impact is now quantifiable and severe. Organizations that have not governed their AI use are paying a concrete penalty when they get breached.28

- 1 in 5 organizations (20%) have already experienced a data breach or leak specifically because of unauthorized AI use.4

- When a breach occurs at an organization with high levels of Shadow AI, the average cost is $670,000 higher.4

- 97% of organizations that did have an AI-related breach admitted they “lacked proper AI access controls”.32 This points to a strong association between a lack of governance and financial harm, although the survey figure alone does not establish causation.

Why is “popular” not the same as “secure”?

There is a dangerous misconception that widely-used AI applications must be enterprise-ready or secure. The “2025 State of Shadow AI Report” from Reco.ai proves the exact opposite is true.4

This is the “Popularity Trap”:

- The Finding: The ten most prevalent Shadow AI apps in enterprises were found to have “alarmingly poor security”.4

- The Data: The average security score for these popular apps was a low 68 (out of 100).51

- The Offenders: Three of the worst offenders—Jivrus, Happytalk, and Stability AI—received “failing security grades” for lacking fundamental protections like multi-factor authentication (MFA), data encryption, and audit logging.4 Other popular but high-risk tools noted include CreativeX and Otter.ai.4

This data proves that we cannot trust employee choice to manage our risk. Employees choose tools based on features and convenience, not security posture.5

What are the “hidden” Shadow AI threats we are missing?

Beyond the obvious, recent data shows three “hidden” threats that are just as dangerous: vendor dependence, long-term entrenchment, and disproportionate risk for small businesses.

- OpenAI Overdependence: OpenAI (the maker of ChatGPT) services account for 53% of all Shadow AI usage.4 This creates a “classic single point of failure”.4 A security incident at OpenAI, or a sudden change in their data policy, could compromise half of your organization’s shadow AI workflows overnight.

- Long-Term Entrenchment: Shadow AI is not a temporary experiment. The 2025 Reco.ai report found that the median usage durations for unapproved tools like CreativeX and System.com were 403 and 401 days, respectively.4 By the time your security team finds the tool, it’s a deeply embedded, “mission-critical” business process, making it politically and operationally impossible to remove.

- Disproportionate Risk: Smaller organizations (11-50 employees) face the highest density of risk, with 269 unsanctioned AI tools per 1,000 employees.4 They lack the security resources to monitor this sprawl, making them prime targets.
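To make that density figure concrete, here is a quick back-of-the-envelope sketch. Only the 269-per-1,000 number comes from the report cited above; the function name and the headcounts are hypothetical, chosen to sit inside the 11-50 employee band.

```python
# Density reported for organizations with 11-50 employees (figure cited above).
TOOLS_PER_1000_EMPLOYEES = 269


def expected_unsanctioned_tools(headcount, density_per_1000=TOOLS_PER_1000_EMPLOYEES):
    """Scale a per-1,000-employee density down to a given headcount."""
    return headcount * density_per_1000 / 1000


if __name__ == "__main__":
    # Hypothetical company sizes within the 11-50 employee band.
    for headcount in (11, 25, 50):
        estimate = expected_unsanctioned_tools(headcount)
        print(f"{headcount} employees: about {estimate:.0f} unsanctioned AI tools")
```

At that rate, a 50-person company would be carrying roughly 13 unsanctioned AI tools, usually with no dedicated security staff to review any of them.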

Table 1: The 2025 State of Shadow AI (Executive Briefing)

Here’s a summary of the hard numbers. This table tells a complete story: Shadow AI is prevalent, it’s costly, and the tools your employees are choosing are insecure.

| Metric | 2024-2025 Statistic | Implication for Your Business |
| --- | --- | --- |
| Employee Prevalence | 71% of office workers use unapproved AI tools.4 | The vast majority of your workforce is already operating outside of governance. |
| Sensitive Data Sharing | 38% of employees admit to sharing sensitive data with AI.3 | Your most valuable data (IP, PII) is actively being exfiltrated. |
| Breach Frequency | 20% of organizations have had a data breach from unauthorized AI.4 | This is not a “what if” scenario; it is a “when” and “how often” problem. |
| The “Shadow AI” Breach Tax | +$670,000 average additional cost to a data breach.4 | Ignoring this problem has a direct, six-figure-plus impact on any future security incident. |
| Single Vendor Risk | 53% of all Shadow AI usage is OpenAI (ChatGPT).4 | Your operations are likely dependent on a single, unmanaged vendor. |
| The “Popularity Trap” | 68/100 is the average security score of the most popular shadow apps.51 | Your employees are, by default, choosing the least secure tools. |
| Long-Term Entrenchment | 400+ Days is the median usage duration for embedded shadow tools.4 | Shadow AI is not “testing”; it’s becoming permanent infrastructure, creating massive security debt. |

Part 4: The CISO’s Playbook: A 3-Phase Strategy for Shadow AI Governance

Here is the “solution” part of the report. This is a practical, step-by-step playbook for getting this problem under control—not by “banning,” but by managing and enabling.

Why is “banning” AI the worst possible strategy?

A “ban-only” approach to AI is the worst possible strategy. It is impossible to enforce, it stifles the very innovation you hired your employees for, and it drives the risky behavior further underground, making it invisible.3

Employees are using AI because it provides real value.3 If you block the “front door” (the official tools), they will simply find a “back door” or “open window” (public AI on their personal phones, home computers, etc.).25 This destroys trust and, most importantly, destroys the very visibility you need to manage the risk.

The goal is not elimination; it’s governance.3 You must shift your security posture from “Doctor No” to “Doctor Yes, and here’s how”.41

Phase 1: How do we find Shadow AI? (Achieve Visibility)

You cannot secure what you cannot see.4 The first phase is to achieve total visibility by combining five separate audit methods—tracking money, identity, endpoints, network traffic, and employee behavior.36

Here is your 5-point detection guide:

- Follow the Money (Expense Audits): Check all expense reports and corporate card logs for “micro-transactions”.36 Look for recurring $15-$50 charges from vendors such as OpenAI, Midjourney, and Anthropic.36 Create a “watch list” of AI vendor names and merchant codes to automate this.

- Follow the Identity (OAuth Audits): Audit your OAuth consent logs from Google and Microsoft.36 Look for suspicious, non-enterprise apps that users have granted broad permissions to, especially Files.ReadWrite.All or Mail.ReadWrite.36 A sudden spike of 10+ users authorizing one app is a red flag for a shadow pilot.36

- Follow the Endpoint (Browser Audits): Audit all managed browser extensions.36 These evade network scans. Look for extensions with broad permissions (activeTab + wildcard host access) 36 or those with “AI,” “GPT,” or “Copilot” in the title.

- Follow the Data (Network Audits): This is where you use your existing security stack:

  - Data Loss Prevention (DLP): Deploy tools to identify sensitive data patterns (like PII or source code) being sent to known AI platforms.1

  - Web Proxy/CASB/SASE: Monitor all outbound traffic and DNS logs for connections to high-risk AI domains.1

  - SIEM: Collect all these logs to detect anomalous patterns of AI misuse.53

- Follow the People (Human Audits): Conduct anonymous surveys to ask employees what tools they use and why.53 This isn’t just a detection method; it’s market research for your internal “AI AppStore.”
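Three of the checks above (expenses, OAuth grants, and browser extensions) lend themselves to simple scripting. The following is a minimal sketch, not a finished tool: the record types, function names, and sample data are all hypothetical, and it assumes you have already exported expense lines, OAuth consent records, and extension titles from your own systems. The “spike” check is also simplified to a count of distinct users per app and ignores the time window.

```python
import re
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical watch list: extend it with the vendors and merchant descriptors you see.
AI_VENDOR_WATCHLIST = ("openai", "midjourney", "anthropic")
# Broad scopes called out in the OAuth audit step above.
RISKY_SCOPES = frozenset({"Files.ReadWrite.All", "Mail.ReadWrite"})
SPIKE_THRESHOLD = 10  # "10+ users authorizing one app"


@dataclass(frozen=True)
class Expense:
    employee: str
    merchant: str
    amount: float


@dataclass(frozen=True)
class OAuthGrant:
    user: str
    app: str
    scopes: frozenset


def flag_expenses(expenses, low=15.0, high=50.0):
    """Return charges in the $15-$50 range whose merchant matches the watch list."""
    return [
        e for e in expenses
        if low <= e.amount <= high
        and any(vendor in e.merchant.lower() for vendor in AI_VENDOR_WATCHLIST)
    ]


def flag_oauth_apps(grants):
    """Return (apps holding a risky scope, apps authorized by 10+ distinct users)."""
    risky = {g.app for g in grants if g.scopes & RISKY_SCOPES}
    users_by_app = defaultdict(set)
    for g in grants:
        users_by_app[g.app].add(g.user)
    spikes = {app for app, users in users_by_app.items() if len(users) >= SPIKE_THRESHOLD}
    return risky, spikes


def flag_extensions(names):
    """Return titles containing "AI" as a whole word, or "GPT"/"Copilot" anywhere."""
    flagged = []
    for name in names:
        lowered = name.lower()
        words = set(re.findall(r"[a-z0-9]+", lowered))
        if "ai" in words or "gpt" in lowered or "copilot" in lowered:
            flagged.append(name)
    return flagged


if __name__ == "__main__":
    sample = [
        Expense("dana", "OPENAI *CHATGPT SUBSCR", 20.00),
        Expense("lee", "Office Depot", 20.00),
    ]
    print([e.employee for e in flag_expenses(sample)])
```

Matching “AI” only as a whole word keeps titles like “Email Tracker” from being flagged by accident. Treat every hit as a lead for a conversation, not as proof of misuse.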

Phase 2: How do we create a policy that works? (Define Governance)

Once you have visibility, you must create a clear and practical governance framework. This involves publishing an AI Acceptable Use Policy (AUP) and classifying all AI tools and data into simple, easy-to-understand tiers.3

First, create your AI Acceptable Use Policy (AUP). This is your foundational legal and operational document.58 It must be written for humans, not just lawyers, and must include:

- Approved Tools List: A “Green List” of tools that are vetted and safe to use.3

- Clear Data Handling Rules: What data can never be put into any external AI (e.g., PII, PHI, IP, trade secrets, confidential strategy).1

- Output Verification Mandate: A rule that employees are personally responsible for verifying all AI-generated output for accuracy and bias. The AI is a copilot, not the pilot.60

- A Request Channel: A clear process for employees to request a new AI tool be reviewed.1
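The data handling rule is easier to follow when something checks prompts before they leave the building. Here is a minimal sketch of such a pre-submission check, assuming just two illustrative patterns (email addresses and payment card numbers validated with the Luhn checksum). The function names are hypothetical, and a real DLP product covers far more data types than this.

```python
import re

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_prohibited_data(prompt: str) -> list[str]:
    """Return labels for the prohibited data types detected in a prompt."""
    findings = []
    if EMAIL_RE.search(prompt):
        findings.append("email address")
    for match in CARD_CANDIDATE_RE.finditer(prompt):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            findings.append("payment card number")
            break
    return findings


if __name__ == "__main__":
    print(find_prohibited_data("Summarize this public article about cloud pricing."))
```

The Luhn step is there to cut false positives: a 16-digit order number that fails the checksum is not reported as a card number.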

Second, classify your tools and data using a simple “traffic light” system that everyone can understand.3

Table 2: Sample AI Tool & Data Classification Framework

This table is a practical, ready-to-use framework you can adapt for your AUP. It translates complex policy into a simple chart for your employees.

| Tier | Tool Category | Data Allowed | Examples |
| --- | --- | --- | --- |
| Tier 1: Approved (Green Light) | Vetted Enterprise-Grade Tools. (Secure, enterprise-level contract, data not used for training, BAA/DPA in place) | Public & Internal/Confidential. (e.g., strategy docs, internal emails) | • Enterprise ChatGPT • Microsoft Copilot (with Data Protection) • Secure Internal LLM/Sandbox 3 |
| Tier 2: Limited Use (Yellow Light) | Niche/Useful Tools with Known Risks. (Use requires specific approval; no sensitive data) | Public Data ONLY. (e.g., summarizing public web articles) | • Otter.ai (for non-confidential meetings) • Perplexity.ai (for public research) • AI Image Generators (for non-IP concepts) |
| Tier 3: Prohibited (Red Light) | Unvetted or High-Risk Tools. (Consumer-grade, “failing” security, no data controls, known leakers) | NO Corporate Data. (Block at firewall/proxy) | • All “consumer” versions (free ChatGPT, etc.) • Jivrus, Happytalk, Stability AI 4 • Any unapproved AI browser extension |
| Tier 4: Regulated (Red Light +) | PII, PHI, PCI, or Financial Data | NEVER allowed in any external AI. (Must only be used in specialized, internal, air-gapped systems) | • Patient Records • Credit Card Numbers • EU Customer Lists |
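If you want to enforce the framework in a proxy rule or an internal portal, the table reduces to a small lookup. The sketch below is one possible encoding, not a prescribed implementation: the enum names, the registry contents, and the function names are hypothetical, and it assumes any tool missing from the registry is treated as Tier 3.

```python
from enum import Enum


class ToolTier(Enum):
    APPROVED = 1    # Tier 1: Green Light
    LIMITED = 2     # Tier 2: Yellow Light
    PROHIBITED = 3  # Tier 3: Red Light


class DataClass(Enum):
    PUBLIC = "public"
    INTERNAL = "internal/confidential"
    REGULATED = "regulated"  # Tier 4: PII, PHI, PCI, or financial data


# Which data classes each tool tier may receive, per the table above.
ALLOWED_DATA = {
    ToolTier.APPROVED: {DataClass.PUBLIC, DataClass.INTERNAL},
    ToolTier.LIMITED: {DataClass.PUBLIC},
    ToolTier.PROHIBITED: set(),
}

# Hypothetical registry seeded with a few examples from the table.
TOOL_REGISTRY = {
    "chatgpt enterprise": ToolTier.APPROVED,
    "otter.ai": ToolTier.LIMITED,
    "perplexity.ai": ToolTier.LIMITED,
    "jivrus": ToolTier.PROHIBITED,
}


def tier_for(tool_name: str) -> ToolTier:
    """Look up a tool's tier; anything unknown defaults to Prohibited."""
    return TOOL_REGISTRY.get(tool_name.strip().lower(), ToolTier.PROHIBITED)


def is_allowed(tier: ToolTier, data: DataClass) -> bool:
    """Return True if this data class may be sent to a tool in this tier."""
    return data in ALLOWED_DATA[tier]


if __name__ == "__main__":
    print(is_allowed(tier_for("Otter.ai"), DataClass.INTERNAL))
```

Defaulting unknown tools to Prohibited mirrors the “Any unapproved AI browser extension” row. Regulated data is absent from every allowed set, so it is rejected for all external tools.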

Phase 3: How do we safely enable innovation? (The Path to “Yes”)

This is the final and most critical phase. You must solve the root problem by providing secure, powerful, and easy-to-use alternatives to the shadow tools, combined with comprehensive training.1

- Provide Secure Alternatives: This is the single most effective strategy to combat Shadow AI.1

  - Internal “AI AppStore”: Create and promote an “allow-list” or internal portal of all your “Tier 1: Approved” tools.3 This makes the safe choice the easy choice.

  - Enterprise-Grade Solutions: Pay for the enterprise versions of the tools employees already want, such as ChatGPT Enterprise or Amazon Q. These come with the security, data-training opt-outs, and audit logs you need.3

  - Build “AI Sandboxes”: For your most demanding, high-risk users (like R&D, developers, and data scientists), a public tool will never be appropriate. Build them a “walled-off” environment 1 where they can safely experiment with open-source models (like Llama) or build internal models using Retrieval-Augmented Generation (RAG) on your corporate data without that data ever leaving your secure perimeter.3

- Train, Don’t Just Tell: You must educate your entire workforce on this new class of risk.1 This training can’t be a boring compliance video. It must:

  - Explain the why (show the Samsung case study).56

  - Explain the risks (data leaks, IP loss, hallucinations).25

  - Explain the rules (your AUP and “Tier” system).25

  - Explain the solutions (the “AI AppStore” and how to request new tools).56

- Foster a Culture of Secure Innovation: Make employees your partners, not your adversaries.

  - Safe Reporting: Create a simple channel (e.g., a “Report-a-Tool” button) where employees can submit new AI tools for security review without fear of punishment.3

  - Reward Adherence: Recognize teams that follow best practices or contribute to the internal prompt library.3

  - Listen to Demand Signals: When you find a shadow tool, your first question shouldn’t be “Who used this?” It should be “What business problem were they trying to solve?”.33 Then, find a secure way to solve it for them. This is how you build trust and become a true business enabler.

Part 5: What Are My Final Recommendations?

Shadow AI is a permanent, new, and dangerous class of risk, but it is manageable. It’s not a technical problem for IT to solve alone; it’s a business-wide strategic risk that requires a 3-part (Visibility, Governance, Enablement) solution led from the top.

Here are the key takeaways:

- Accept Reality: Shadow AI is already in your organization. Your goal is not (and cannot be) to eliminate it. Your goal is to manage it by bringing it out of the shadows and into the light.

- Quantify the Risk: The $670,000 “Shadow AI Tax” on data breaches is a real, measurable cost.4 Use this number to justify investment in a proper governance program and enterprise-grade tools.

- Lead with “Enablement,” Not “Bans”: A “ban-only” policy will fail and make you more blind. Lead with a strategy that enables productivity by providing secure, powerful alternatives. Your message must be: “Yes, we want you to use AI—and here is the safe, powerful way to do it.”

- Change Your “Threat Model”: Your biggest insider risk is no longer a malicious actor; it’s a well-intentioned employee with a public ChatGPT account.8 You must arm them with the right tools and training to be your first line of defense, not your biggest liability.

What’s the most surprising (or worrying) AI tool you’ve seen pop up in your organization, and how are you balancing the need for security with the push for innovation?

Works cited

- Hidden Risks of Shadow AI – Varonis, accessed November 11, 2025, https://www.varonis.com/blog/shadow-ai

- What is shadow AI? Risks and solutions for businesses – Zendesk, accessed November 11, 2025, https://www.zendesk.com/blog/shadow-ai/

- AI Gone Wild: Why Shadow AI Is Your IT Team’s Worst Nightmare – Cloud Security Alliance, accessed November 11, 2025, https://cloudsecurityalliance.org/blog/2025/03/04/ai-gone-wild-why-shadow-ai-is-your-it-team-s-worst-nightmare

- Popular Doesn’t Mean Secure – The 2025 State of Shadow AI Report Findings – Reco, accessed November 11, 2025, https://www.reco.ai/blog/popular-doesnt-mean-secure-the-2025-state-of-shadow-ai-report-findings

- The Hidden Dangers of Shadow AI: What Business Leaders Can’t Afford to Miss – PMsquare, accessed November 11, 2025, https://pmsquare.com/resource/blogs/shadow-ai-guide-for-business-leaders/

- Shadow AI: The Silent Security Risk Lurking in Your Enterprise – F5, accessed November 11, 2025, https://www.f5.com/company/blog/shadow-ai-the-silent-security-risk-lurking-in-your-enterprise

- accessed November 11, 2025, https://www.grip.security/glossary/shadow-ai#:~:text=Shadow%20AI%20in%20cybersecurity%20is,%2C%20access%2C%20and%20data%20protection.

- What Is Shadow AI? – IBM, accessed November 11, 2025, https://www.ibm.com/think/topics/shadow-ai

- Shadow AI & Governance: How Hidden Models Threaten Enterprise Security | by Brij Gupta | Oct, 2025, accessed November 11, 2025, https://medium.com/@gupta.brij/shadow-ai-governance-how-hidden-models-threaten-enterprise-security-eb7c68df127b

- What is Shadow AI? Why It’s a Threat and How to Embrace and Manage It | Wiz, accessed November 11, 2025, https://www.wiz.io/academy/shadow-ai

- Shadow AI Explained: How Your Employees Are Already Using AI in Secret – Medium, accessed November 11, 2025, https://medium.com/@motasemhamdan/shadow-ai-explained-how-your-employees-are-already-using-ai-in-secret-735f94889b6e

- Whoops, Samsung workers accidentally leaked trade secrets via ChatGPT – Mashable, accessed November 11, 2025, https://mashable.com/article/samsung-chatgpt-leak-details

- What is Shadow AI? | Examples and How to Stop It – Grip Security, accessed November 11, 2025, https://www.grip.security/glossary/shadow-ai

- Shadow AI vs. Shadow IT: The Role of SaaS Risk Assessments and Zero Trust for Risk Mitigation – Spin.AI, accessed November 11, 2025, https://spin.ai/blog/shadow-ai-vs-shadow-it-role-of-saas-risk-assessment-zero-trust-risk-mitigation/

- Shadow AI: Examples, Risks, and 8 Ways to Mitigate Them, accessed November 11, 2025, https://www.mend.io/blog/shadow-ai-examples-risks-and-8-ways-to-mitigate-them/

- Shadow AI: Companies struggle to control unsanctioned use of new tools – SiliconANGLE, accessed November 11, 2025, https://siliconangle.com/2025/03/17/shadow-ai-companies-struggle-control-unsanctioned-use-new-tools/

- Shadow AI: Unsanctioned GenAI Tools & Their Oversight – BigID, accessed November 11, 2025, https://bigid.com/blog/shadow-ai/

- How to Detect and Mitigate Shadow AI in Your Organization – Harmonic Security, accessed November 11, 2025, https://www.harmonic.security/blog-posts/how-to-detect-and-mitigate-shadow-ai-in-your-organization

- The Rise of Shadow AI: How To Harness Innovation Without Compromising Security, accessed November 11, 2025, https://bernardmarr.com/the-rise-of-shadow-ai-how-to-harness-innovation-without-compromising-security/

- What is Shadow AI? Risks, Tools, and Best Practices for 2025 – Lasso Security, accessed November 11, 2025, https://www.lasso.security/blog/what-is-shadow-ai

- The Shadow AI Data Leak Problem No One’s Talking About – UpGuard, accessed November 11, 2025, https://www.upguard.com/blog/shadow-ai-data-leak

- A Guide to the Hidden Risks of Shadow AI | Concentric, accessed November 11, 2025, https://concentric.ai/a-guide-to-the-hidden-risks-of-shadow-ai/

- Shadow AI – The Hidden Threat to Governance & Compliance – Structured, accessed November 11, 2025, https://structured.com/blog/shadow-ai-the-hidden-threat/

- Shadow AI: The Hidden Risks of Unsanctioned Artificial Intelligence – Bitrock, accessed November 11, 2025, https://bitrock.it/blog/shadow-ai-the-hidden-risks-of-unsanctioned-artificial-intelligence.html

- Employees Are Embracing ‘Shadow AI’ – and Putting Company Data at Risk | Tanium, accessed November 11, 2025, https://www.tanium.com/blog/employees-are-embracing-shadow-ai-and-putting-company-data-at-risk/

- Shadow AI: The Compliance Risk You Might Be Missing – Pruvent PLLC, accessed November 11, 2025, https://pruvent.com/2025/05/23/shadow-ai-the-compliance-risk-you-might-be-missing/

- Importance of Addressing Shadow AI for HIPAA Compliance, accessed November 11, 2025, https://aihc-assn.org/importance-of-addressing-shadow-ai-for-hipaa-compliance/

- Shadow AI Statistics: How Unauthorized AI Use Costs Companies – Programs.com, accessed November 11, 2025, https://programs.com/resources/shadow-ai-stats/

- Is Your Company’s AI a Waste of Money? The Rise of the Shadow Economy – AiThority, accessed November 11, 2025, https://aithority.com/guest-authors/is-your-companys-ai-a-waste-of-money-the-rise-of-the-shadow-economy/

- The Shadow Costs of AI and How to Bring Them to Light | Built In, accessed November 11, 2025, https://builtin.com/artificial-intelligence/ai-shadow-costs

- Intersys: Awareness, then Action – containing re/insurance’s shadow AI threat, accessed November 11, 2025, https://www.globalreinsurance.com/home/intersys-awareness-then-action-containing-re/insurances-shadow-ai-threat/1456878.article

- ‘Shadow AI’ increases cost of data breaches, report finds | Cybersecurity Dive, accessed November 11, 2025, https://www.cybersecuritydive.com/news/artificial-intelligence-security-shadow-ai-ibm-report/754009/

- The Hidden Cyber Threat Of Shadow AI — And How To Manage It – Centric Consulting, accessed November 11, 2025, https://centricconsulting.com/blog/the-hidden-cyber-threat-of-shadow-ai-and-how-to-manage-it/

- IBM Report: 13% Of Organizations Reported Breaches Of AI Models Or Applications, 97% Of Which Reported Lacking Proper AI Access Controls, accessed November 11, 2025, https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls

- Shadow AI In 2025: Five Insights For Security Teams – Forbes, accessed November 11, 2025, https://www.forbes.com/councils/forbestechcouncil/2025/10/24/shadow-ai-in-2025-five-insights-for-security-teams/

- 5 Ways to Detect Shadow AI Apps in Your Organization | Torii, accessed November 11, 2025, https://www.toriihq.com/articles/detect-shadow-ai

- Small Purchases, Big Risks: Shadow AI Use In Government – Forrester, accessed November 11, 2025, https://www.forrester.com/blogs/small-purchases-big-risks-shadow-ai-use-in-government/

- The Business Risk of AI Hallucinations: How to Protect Your Brand | NeuralTrust, accessed November 11, 2025, https://neuraltrust.ai/blog/ai-hallucinations-business-risk

- From Misinformation to Missteps: Hidden Consequences of AI Hallucinations, accessed November 11, 2025, https://seniorexecutive.com/ai-model-hallucinations-risks/

- How can tech leaders manage emerging generative AI risks today while keeping the future in mind? – Deloitte, accessed November 11, 2025, https://www.deloitte.com/us/en/insights/topics/digital-transformation/four-emerging-categories-of-gen-ai-risks.html

- From Shadow AI to Safe Adoption: Guardrails for Enterprise AI, accessed November 11, 2025, https://www.youtube.com/watch?v=Hy9r70Oe_wk

- Shadow AI Explained: Causes, Consequences, and Best Practices for Control – Zylo, accessed November 11, 2025, https://zylo.com/blog/shadow-ai/

- Menlo Security’s 2025 Report Uncovers 68% Surge in “Shadow” Generative AI Usage in the Modern Enterprise, accessed November 11, 2025, https://www.menlosecurity.com/press-releases/menlo-securitys-2025-report-uncovers-68-surge-in-shadow-generative-ai-usage-in-the-modern-enterprise

- What is Shadow AI? – Copyright Clearance Center (CCC), accessed November 11, 2025, https://www.copyright.com/blog/what-is-shadow-ai/

- Gartner Survey Finds Risk Leaders Concerned About Low-Growth Economic Environment and AI Risks in Third Quarter of 2025, accessed November 11, 2025, https://www.gartner.com/en/newsroom/press-releases/2025-11-06-gartner-survey-finds-risk-leaders-concered-about-low-growth-econ-environment-and-ai-risks-in-q3

- Survey highlights Q2 2025 emerging risks: Shadow AI now in the top five, accessed November 11, 2025, https://resilienceforward.com/survey-highlights-q2-2025-emerging-risks-shadow-ai-now-in-the-top-five/

- Shadow IT Statistics: Key Facts to Learn in 2025 – Zluri, accessed November 11, 2025, https://www.zluri.com/blog/shadow-it-statistics-key-facts-to-learn-in-2024

- Shadow AI emerges as significant cybersecurity threat – Financial Management magazine, accessed November 11, 2025, https://www.fm-magazine.com/news/2025/aug/shadow-ai-emerges-as-significant-cybersecurity-threat/

- Newsroom: Check Reco’s Latest Company Updates, accessed November 11, 2025, https://www.reco.ai/newsroom

- New Study Reveals 400+ Days of Hidden AI Tool Usage Across Enterprises, Creating Mounting Data Exposure – Reco AI, accessed November 11, 2025, https://www.reco.ai/blog/new-study-reveals-400-days-of-hidden-ai-tool-usage-across-enterprises-creating-mounting-data-exposure

- 2025 State of Shadow AI Report – Reco AI, accessed November 11, 2025, https://www.reco.ai/state-of-shadow-ai-report

- Don’t Say No, Say How: Shadow AI, BYOD, & Cybersecurity Risks, accessed November 11, 2025, https://www.youtube.com/watch?v=U9Ckc3MecvA

- Shadow AI Explained: Meaning, Examples, and How to Manage It, accessed November 11, 2025, https://www.zscaler.com/zpedia/what-is-shadow-ai

- Unmasking Shadow AI: What Is it and How Can You Manage it? – UpGuard, accessed November 11, 2025, https://www.upguard.com/blog/unmasking-shadow-ai

- AI Access Security – Palo Alto Networks, accessed November 11, 2025, https://www.paloaltonetworks.com/sase/ai-access-security

- Shadow AI: How to Bring Employee AI Use Out of the Dark – KnowledgeCity, accessed November 11, 2025, https://www.knowledgecity.com/blog/shadow-ai-how-to-bring-employee-ai-use-out-of-the-dark/

- Questions From the C-Suite: Shadow AI – STEP Software, accessed November 11, 2025, https://www.stepsoftware.com/questions-from-the-c-suite-shadow-ai/

- How to craft an Acceptable Use Policy for gen AI (and look smart …, accessed November 11, 2025, https://cloud.google.com/transform/how-to-craft-an-acceptable-use-policy-for-gen-ai-and-look-smart-doing-it

- AI Demystified: Crafting an Effective AI Acceptable Use Policy – Phillips Lytle LLP, accessed November 11, 2025, https://phillipslytle.com/ai-demystified-crafting-an-effective-ai-acceptable-use-policy/

- Framework of an Acceptable Use Policy for External … – FS-ISAC, accessed November 11, 2025, https://www.fsisac.com/hubfs/Knowledge/FrameworkOfAnAcceptableUsePolicyForExternalGenerativeAI.pdf

- How to craft an AI acceptable use policy to protect your campus today – EAB, accessed November 11, 2025, https://eab.com/resources/blog/strategy-blog/craft-ai-acceptable-use-policy-protect-campus/

- Navigating the risks of ‘shadow AI’ – BCS, The Chartered Institute for IT, accessed November 11, 2025, https://www.bcs.org/articles-opinion-and-research/navigating-the-risks-of-shadow-ai/

- How to implement shadow AI governance best practices now – Ki Ecke, accessed November 11, 2025, https://ki-ecke.com/insights/how-to-implement-shadow-ai-governance-best-practices-now/

- AI blind spots CIOs can’t afford to ignore – Simpplr, accessed November 11, 2025, https://www.simpplr.com/blog/ai-blind-spots-for-cios/